I wanted to write a blog post about some of the thoughts I’ve been having about character backstory / history generation techniques. I don’t claim they’re original, but I think they’re pretty powerful!

There’s a great quote from the makers of Caves of Qud on their history generation approach that gives a really good organizational framework for this post, so I’m gonna start with it:

“There’s a distinction to be made between history as a process and history as an artifact. The former can be conceptualized as the playing out of rules and relationships that continually produce the present. To simulate this process, we might seek to reproduce its logic. On the other hand, the latter is a constituent of the present, something we engage with through a contemporary lens and whose complexities may be obscured by that flattening of perspective.”

(Subverting historical cause & effect: generation of mythic biographies in Caves of Qud)

I’m going to take these two approaches, apply them to a technique I call “recursive narrative scaffolding”, and talk about the advantages and disadvantages of each.

The Technique: Recursive Narrative Scaffolding

The general approach isn’t one I’m claiming is unique, and it may go by other names elsewhere, but the name I use for it is recursive narrative scaffolding. The idea is pretty simple, but can give rise to complex structures if built out and designed correctly.

If you’ve played the indie RPG Microscope, well, it’s that thing. A scaffold is a generalized narrative building block that can stand in for a Period, Event, or Scene, depending on how much time it spans.

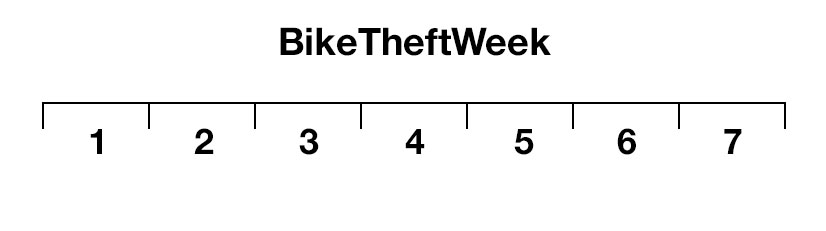

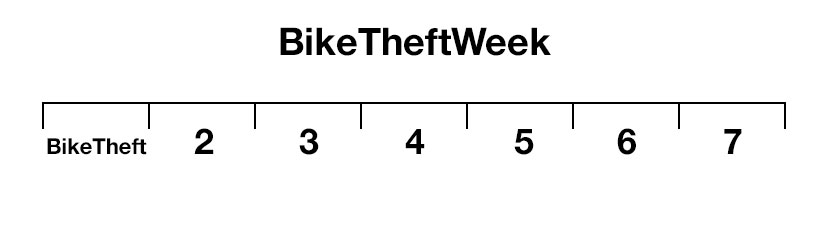

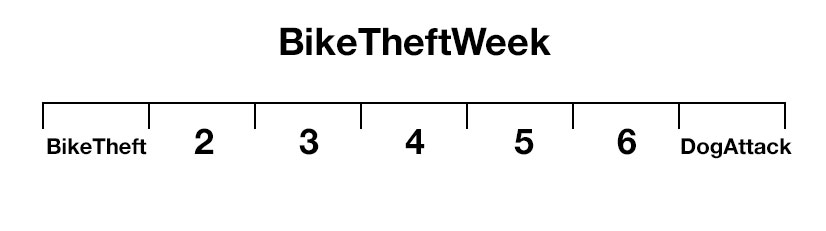

Here’s a quick example. Say you have an event that lasts 7 timesteps. Let’s call it BikeTheftWeek.

At the beginning of BikeTheftWeek, you saw someone stealing your bike. Before you could stop them, they rode off laughing. The jerk! We’ll call this event BikeStolen.

You went through the rest of your week as usual. But then, on the last day of the week, you saw your bike! The person who stole it was on the ground, and a dog was biting his pant leg. Ha ha, take that!

So, modeling this, we would say BikeTheftWeek has 7 timesteps (one for each day), and at timestep 1 the sub-event BikeStolen happened. A bunch of other stuff happened during timesteps 2-6 that we don’t care about right now, and at timestep 7 DogAttack happened to the bike thief.
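To make that concrete, here’s a minimal Python sketch of a scaffold as a recursive data structure. The `Scaffold` class, its fields, and the `events_at` helper are hypothetical names for illustration, not Delve’s actual code.

```python
from dataclasses import dataclass, field


@dataclass
class Scaffold:
    """A named span of timesteps whose beats can themselves be scaffolds.

    Hypothetical structure for illustration only.
    """
    name: str
    length: int  # number of timesteps this scaffold spans
    # Timestep (1-indexed, relative to this scaffold) -> sub-scaffold starting there.
    children: dict[int, "Scaffold"] = field(default_factory=dict)

    def events_at(self, step: int) -> list[str]:
        """Names of the sub-events that start at `step` (empty if nothing we care about)."""
        child = self.children.get(step)
        return [child.name] if child is not None else []


bike_theft_week = Scaffold(
    name="BikeTheftWeek",
    length=7,  # one timestep per day
    children={
        1: Scaffold("BikeStolen", length=1),
        # Timesteps 2-6: other stuff we don't care about right now.
        7: Scaffold("DogAttack", length=1),
    },
)
```

Because a sub-event is just another `Scaffold`, BikeStolen could later be given its own children without changing anything else.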

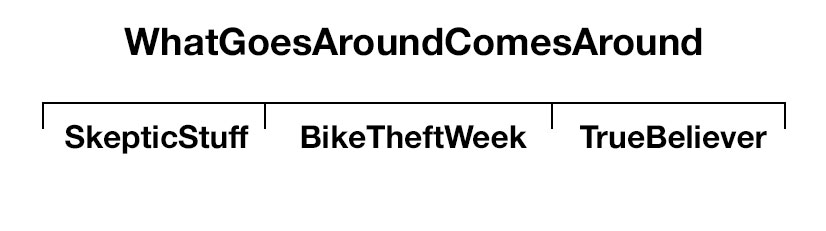

You could zoom out and imagine an even bigger scaffold, something like WhatGoesAroundComesAround, where BikeTheftWeek is a sub-event that happens in the middle of it, with the beginning containing an event (or events) where the character demonstrates their skepticism of fate, then an ending where they come to believe in it.

There’s no limit to how far you can zoom in or out. You can have one event, “GoodLife”, that encapsulates a character’s entire life, or one, “CharacterBlinks”, that’s only one second long. You can adjust the scope up and down depending on where you want to put content. In my current game, Delve (working title), there is one root event called “History”, which lasts as long as the simulation and involves every character. Everything else from there is just…sub-division.
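Zooming out is just wrapping one scaffold inside another, so walking the whole tree is a simple recursion. A sketch, assuming children are stored with 0-indexed start offsets; `DoubtsFate`, `BelievesInFate`, and all the lengths and offsets are invented for the example. A single root like “History” would simply sit at the top of a (much bigger) tree like this.

```python
from dataclasses import dataclass, field
from typing import Iterator


@dataclass
class Scaffold:
    name: str
    length: int  # timesteps spanned
    # (offset, child) pairs; offset 0 means the child starts at this scaffold's first timestep.
    children: list[tuple[int, "Scaffold"]] = field(default_factory=list)


def flatten(s: Scaffold, start: int = 0, depth: int = 0) -> Iterator[tuple[int, int, str]]:
    """Walk the scaffold tree, yielding (absolute_start, depth, name) for each node."""
    yield start, depth, s.name
    for offset, child in s.children:
        yield from flatten(child, start + offset, depth + 1)


bike_theft_week = Scaffold("BikeTheftWeek", 7, [
    (0, Scaffold("BikeStolen", 1)),
    (6, Scaffold("DogAttack", 1)),
])

# Zooming out: the same week becomes one beat of a larger arc.
what_goes_around = Scaffold("WhatGoesAroundComesAround", 30, [
    (0, Scaffold("DoubtsFate", 5)),
    (10, bike_theft_week),
    (25, Scaffold("BelievesInFate", 5)),
])

for start, depth, name in flatten(what_goes_around):
    print("  " * depth + f"{name} (starts at t={start})")
```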

That’s it! So now that we have an approach, let’s talk about two different types of implementations: history as artifact, and history as process.

History as Artifact: Dwarf Grandpa and Rationalization

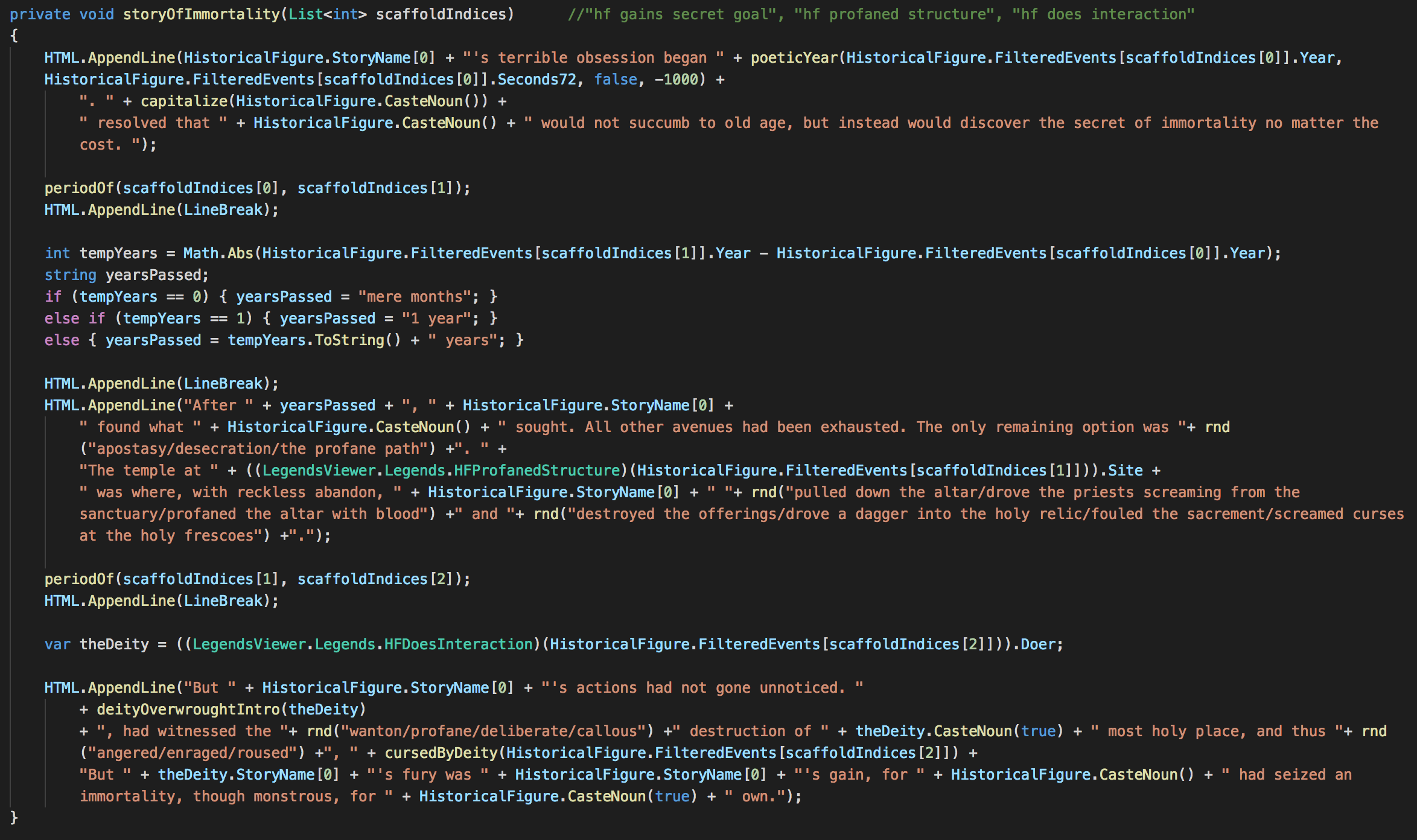

Back in 2014, I made a thing I call Dwarf Grandpa. It was just a prototype mod to Legends Viewer for Dwarf Fortress, so I won’t talk it up much. But basically, it took the events exported from Dwarf Fortress and wrote character stories from them.

Actually, stories for all kinds of characters is a big ask, so it just did stories for vampires.

Actually, stories for all vampires is a big ask. It did certain stories for certain types of vampires in Dwarf Fortress.

Basically, I made a small library of scaffolds. In this implementation, a scaffold was a grouping of sub-events that were ordered in time, but not in strict increments (i.e. I didn’t care whether “Eat” came 2 or 3 or 50 timesteps after “Hunt”, only that “Eat” came after “Hunt”). Each scaffold had an associated contextualized grammar that could read simple data from state (like which characters were involved).
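“Ordered in time, but not in strict increments” is a subsequence test. Here’s a minimal Python sketch, assuming events are reduced to plain type strings (real Dwarf Fortress exports carry far more data per event); `matches_in_order` is a hypothetical name.

```python
def matches_in_order(pattern: list[str], events: list[str]) -> bool:
    """True if every event type in `pattern` occurs in `events` in the same order.

    Gaps of any size are allowed between matched events, so "Eat" may come
    2, 3, or 50 timesteps after "Hunt"; only the relative order matters.
    """
    it = iter(events)
    # `step in it` consumes the shared iterator up to and including `step`,
    # so each pattern element has to be found after the previous one.
    return all(step in it for step in pattern)


history = ["Wander", "Hunt", "Sleep", "Sleep", "Eat", "Wander"]
print(matches_in_order(["Hunt", "Eat"], history))  # True
print(matches_in_order(["Eat", "Hunt"], history))  # False
```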

Once I had a library, I ran pattern matching over the events for each character in the exported Dwarf Fortress history, checking to see if I could apply a scaffold to it. If so, then I would run the simple grammar for it, and include it in the character’s bio.

The system would start from the scaffolds that could account for the largest swath of a character’s life and work down to the smallest. Scaffolds were also allowed to overlap.
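Putting the matching and the grammars together, a rationalization pass might look roughly like this. It’s a sketch under several assumptions: events are dicts with a `"type"` key and an optional `"other"` participant, each “grammar” is reduced to a single format string, “largest” means “longest pattern”, and every name here (`PatternScaffold`, `write_bio`, the vampire events) is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PatternScaffold:
    name: str
    pattern: list[str]  # event types that must appear in this order (gaps allowed)
    template: str       # tiny stand-in for a contextualized grammar


def find_in_order(pattern: list[str], events: list[dict]) -> Optional[list[int]]:
    """Indices of the earliest in-order match of `pattern` in `events`, or None."""
    indices, pos = [], 0
    for wanted in pattern:
        while pos < len(events) and events[pos]["type"] != wanted:
            pos += 1
        if pos == len(events):
            return None
        indices.append(pos)
        pos += 1
    return indices


def write_bio(character: str, events: list[dict], library: list[PatternScaffold]) -> list[str]:
    """Try scaffolds from largest to smallest; overlapping matches are all kept."""
    bio = []
    for scaffold in sorted(library, key=lambda s: len(s.pattern), reverse=True):
        match = find_in_order(scaffold.pattern, events)
        if match is None:
            continue
        # "Read simple data from state": the other party in the first matched event.
        other = events[match[0]].get("other", "someone")
        bio.append(scaffold.template.format(who=character, other=other))
    return bio


library = [
    PatternScaffold("Turned", ["Bitten", "Hunt"], "{who} was turned by {other} and soon began to hunt."),
    PatternScaffold("Feeding", ["Hunt", "Eat"], "{who} stalked and fed upon {other}."),
    PatternScaffold("Exiled", ["Bitten", "Hunt", "Exiled"],
                    "After {other}'s bite, {who} hunted until the town drove them out."),
]
events = [
    {"type": "Bitten", "other": "Urist"},
    {"type": "Hunt", "other": "a goat"},
    {"type": "Eat"},
    {"type": "Exiled"},
]
for line in write_bio("Olon", events, library):
    print(line)
```

Note that “Exiled” and “Turned” both cover the bite and the hunt; since overlaps are allowed, both end up in the bio.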

You could imagine using this approach if your game puts more emphasis on simulation: you have a rich data model to draw from, and you know in advance that the recorded events follow some sort of logic, so you don’t have to gate the scaffolds behind a lot of pre-condition logic. Essentially, this provides a “human-readable” version of the simulation’s processes, with extra flavor added.

Caveats

The generated events for characters must not be random. They in fact follow a rigorous simulation logic, so the “facts” of the stories are already baked in. I can rely on the simulation to not give me an event set where the character dies in the middle, or somehow is in two places at once, etc. The conventions of the simulation logic are the bare minimum conventions of the storytelling about that world, so they do the heavy lifting for me.

You need lots of material per character. This worked for me because Dwarf Fortress exports a crapload of event data for characters. Especially vampires, holy cow. Given how many events there are, you can tell an interesting bio using only 1% of a character’s life events. That set the bar for generating surface text pretty low, which was cool: we could filter the hell out of the events and still have plenty of material left over.

Why Is This Cool?

Using this approach, it’s fairly straightforward (I won’t say easy) to implement a system where there is a subjective notion of history, and those notions can be unique to different character perspectives.

Imagine a set of global scaffolds you can apply, then culture-specific scaffolds, then character-specific scaffolds, then state-specific scaffolds. Maybe there’s a character who reads lots of romances. If you write lots of romance-specific patterns for their use, then when the player asks them to describe a brooding, aloof character, they may come up with a very different interpretation of that character’s history than someone who hates people who brood would. None of the motivations or other things referenced in the event text need to be modeled in the simulation, so long as they’re consistent with it. That way, you always have a core you can work from.
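Here’s a hedged sketch of that layering. Each interpretation declares the narrator tags it requires and which layer it belongs to, and the narrator uses the usable interpretation from the most specific layer. The tag names, the `layer` numbering, and the “highest layer wins” rule are my assumptions for illustration. The bare fact (this person is aloof) is the shared core; only the spin changes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interpretation:
    layer: int           # 0 = global, 1 = culture, 2 = character, 3 = state
    requires: frozenset  # narrator tags needed to use this interpretation
    template: str


# Hypothetical library for describing a brooding, aloof character.
LIBRARY = [
    Interpretation(0, frozenset(), "{subject} keeps to themselves."),
    Interpretation(1, frozenset({"river_folk"}), "{subject} isn't from around here, and it shows."),
    Interpretation(2, frozenset({"reads_romances"}),
                   "{subject} hides a wounded heart behind that cold stare."),
    Interpretation(2, frozenset({"hates_brooding"}), "{subject} just sulks to get attention."),
]


def describe(subject: str, narrator_tags: set) -> str:
    """Pick the usable interpretation from the most specific layer and render it."""
    usable = [i for i in LIBRARY if i.requires <= narrator_tags]
    best = max(usable, key=lambda i: i.layer)  # the global layer is always usable
    return best.template.format(subject=subject)


print(describe("Corvin", {"reads_romances"}))
print(describe("Corvin", {"hates_brooding", "river_folk"}))
```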

Conflicting accounts! Accounts that conflict in consistent ways, which you could even surface to the player! Say two characters witness a murder: one is a pessimist, and the other believes in justice in the hereafter. When asked to describe the event, the pessimist would consult their library of scaffolds and output the text “Someone was murdered, which just goes to show that life is short and brutal.” You could surface why to the player with something like “(Char1 is a pessimist)”. The other character would have access to scaffolds that render the text “Someone was murdered, but the murderer will get what’s coming to them, in life or the hereafter.” with the explanation “(Char2 believes in justice in the hereafter)”.
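A minimal sketch of the murder example: each account carries both the text and the human-readable reason it was chosen, so the explanation comes for free. `Account`, `recount`, and the trait keys are hypothetical names, and a real library would hold many accounts per event type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    trait: str        # narrator trait that unlocks this interpretation
    explanation: str  # human-readable reason, surfaced to the player
    template: str


# Hypothetical interpretations of a single "Murder" event.
MURDER_ACCOUNTS = [
    Account("pessimist", "is a pessimist",
            "Someone was murdered, which just goes to show that life is short and brutal."),
    Account("believes_in_justice", "believes in justice in the hereafter",
            "Someone was murdered, but the murderer will get what's coming to them, "
            "in life or the hereafter."),
]


def recount(name: str, traits: set) -> str:
    """Describe the murder from one witness's perspective, with the reason why."""
    for account in MURDER_ACCOUNTS:
        if account.trait in traits:
            return f"{account.template} ({name} {account.explanation})"
    return "Someone was murdered."  # no interpretive scaffold applies: just the fact


print(recount("Char1", {"pessimist"}))
print(recount("Char2", {"believes_in_justice"}))
```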

Again, the power of this approach is in the scaffold library, which can be gated on groupings of characters by culture, socio-economic status, or disposition, or even on dynamic tags that arise from the simulation, like “survived trauma” or “wonderful childhood”. It makes me think of all the cool work Fox Harrell does with cultural phantasms. And if you don’t know what I’m talking about, you need to read Phantasmal Media pronto.

Simulation is a lot of work, though. “I’m just doing this for the stories, man. I don’t care if this character has a negative trust value with this other character. Who has time to model all that?”

Well yeah, ok fair. Consider this other approach:

History as Process: Delve (working title) and Recursive Pooling

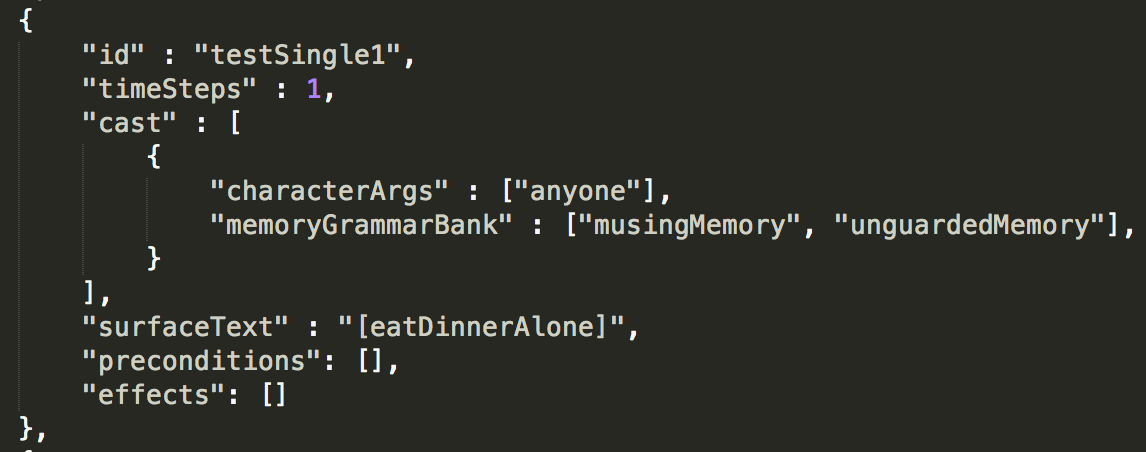

In the current game I’m working on, I take the same scaffolds, but instead of having slots that match a pattern of events provided by the simulation, each sub-event in the scaffold has pre-conditions it has to pass. These pre-conditions may focus more on story than on simulation state. They may use flags or traits that don’t affect the wider game, like “hadBadEvent” or “ripeForDistrust”.
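Here’s a minimal sketch of story-level pre-conditions, using the two flag names from the paragraph above. `SubEvent`, `try_fire`, the `Betrayal`/`HoldGrudge` events, and the idea that firing an event raises new flags are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable

Flags = dict[str, bool]


@dataclass
class SubEvent:
    name: str
    precondition: Callable[[Flags], bool]
    sets: tuple[str, ...] = ()  # story flags raised when the sub-event fires


def try_fire(event: SubEvent, flags: Flags) -> bool:
    """Fire the sub-event if its pre-condition passes, updating story flags."""
    if not event.precondition(flags):
        return False
    for flag in event.sets:
        flags[flag] = True
    return True


# Hypothetical sub-events gated on story flags that never touch the wider game.
betrayal = SubEvent("Betrayal", lambda f: f.get("hadBadEvent", False), sets=("ripeForDistrust",))
grudge = SubEvent("HoldGrudge", lambda f: f.get("ripeForDistrust", False))

flags: Flags = {}
print(try_fire(grudge, flags))    # False: nothing has soured them yet
flags["hadBadEvent"] = True
print(try_fire(betrayal, flags))  # True, and it raises ripeForDistrust
print(try_fire(grudge, flags))    # True
```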

The library of scaffolds is therefore also the library of simulation events. This means that as the simulation proceeds through time, at any given point one or several scaffolds may be determining what a character’s future holds (I call this the character’s geas, or fate).

The difference between this approach and the earlier one of historical rationalization is that the scaffolds, which might look like:

Timesteps 1-50: make some friends

Timesteps 51-100: friends gradually end up owing you favors

Timesteps 101-150: you call in favors to rise to power

ensure that the patterns they encode actually take place. In contrast, it can be quite difficult to make such specific arrangements of events happen if we randomly generate events and then try to match a pattern against them. Each approach fails in its own way: if I write only one incredibly specific scaffold for a 50-event sequence, then with this approach everyone gets that scaffold, while with the earlier approach hardly anyone meets its requirements, so it rarely shows up.
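A sketch of the difference: here the scaffold’s active phase decides what happens at each timestep, rather than the pattern being matched afterwards. The three 50-step phases follow the example above; the `Phase` class, `run_scaffold`, and the action names are hypothetical, and pre-condition checks are left out to keep it short.

```python
from dataclasses import dataclass


@dataclass
class Phase:
    start: int   # first timestep of the phase (inclusive)
    end: int     # last timestep of the phase (inclusive)
    action: str  # what the scaffold makes happen during the phase


# Three 50-step phases; action names are invented.
RISE_TO_POWER = [
    Phase(1, 50, "make_friend"),
    Phase(51, 100, "friend_owes_favor"),
    Phase(101, 150, "call_in_favor"),
]


def run_scaffold(phases: list[Phase], timesteps: int) -> list[tuple[int, str]]:
    """History as process: the active phase decides what happens at each timestep.

    Nothing is generated first and matched afterwards, so (with pre-conditions
    omitted here) the authored pattern is guaranteed to appear in the history.
    """
    history = []
    for t in range(1, timesteps + 1):
        active = next((p for p in phases if p.start <= t <= p.end), None)
        if active is not None:
            history.append((t, active.action))
    return history


history = run_scaffold(RISE_TO_POWER, 150)
```

This also shows the caveat that follows: every character run through `RISE_TO_POWER` produces the same history.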

Caveats

Until you get a rich library of events, everyone will do the same thing.

This isn’t terribly surprising–it’s an outgrowth of closely tying the generated story events to the simulation logic. This means that until your library reaches a critical mass, you’ll be stuck with all your characters doing the same, possibly highly-specific action chains again and again, and until you design a scaffold approach that’s generative and flexible, it’s going to stay that way.

Player interaction can wreck your china shop.

This approach is great when you have total control over the system that’s generating events, but adding a player into the mix can really throw a wrench in the works. If your characters are currently stepping through a series of nested scaffolds which lead to them ascending to the high court and becoming ladies and lords of the land, but the player causes an explosion at timestep 2 that kills them all, you’ve got a serious problem on your hands. Ideally, your scaffold library should be granular and flexible enough to recover from this, but it will take some careful forethought.
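One possible shape for that forethought, as a sketch only: after each player action, re-validate every character’s geas and move survivors whose scaffold can no longer complete onto a fallback. The “everyone it needs must be alive” check, the `PickUpThePieces` fallback, and all names here are assumptions, not how Delve handles it.

```python
from dataclasses import dataclass, field


@dataclass
class Geas:
    scaffold: str
    needs_alive: set  # characters the scaffold can't continue without


@dataclass
class World:
    alive: set
    fates: dict = field(default_factory=dict)  # character -> Geas


def revalidate_fates(world: World, fallback: str = "PickUpThePieces") -> list:
    """After a player action, repair any geas the world can no longer support.

    Dead characters lose their fate; survivors whose scaffold needed someone
    who died are moved onto a (hypothetical) fallback scaffold.
    """
    repaired = []
    for who, geas in list(world.fates.items()):
        if who not in world.alive:
            del world.fates[who]
        elif not geas.needs_alive <= world.alive:
            world.fates[who] = Geas(fallback, {who})
            repaired.append(who)
    return repaired


world = World(alive={"Ada", "Bran", "Cyra"}, fates={
    "Ada": Geas("AscendToHighCourt", {"Ada", "Bran"}),
    "Bran": Geas("AscendToHighCourt", {"Ada", "Bran"}),
    "Cyra": Geas("QuietLife", {"Cyra"}),
})
world.alive.discard("Bran")  # the player's explosion at timestep 2
repaired = revalidate_fates(world)
print(repaired)
```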

This suggests the approach is especially well suited to generating backstories that take place before the player gets involved, so you don’t have to handle that case.

Why Is This Cool?

More control, more complex event structures.

Because the scaffolds are calling the shots (and playing a larger role in driving the simulation), you can create more complex structures and guarantee that they appear. Whereas with the first approach it might be statistically difficult to generate a 30-event sequence that conforms to an authored pattern, with this approach it’s quite easy to make that happen, since the scaffold pre-conditions are the only blockers.
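To put a rough number on “statistically difficult”, take the strictest case: a window of n randomly generated events, each drawn independently and uniformly from k event types, has to match an authored n-event sequence exactly. These assumptions are mine, for illustration. Allowing gaps, as Dwarf Grandpa did, makes a match more likely, but longer patterns still get steeply rarer.

```latex
P(\text{window matches}) = \left(\frac{1}{k}\right)^{n},
\qquad k = 10,\ n = 30 \ \Rightarrow\ P = 10^{-30}
```

When the scaffold drives the simulation instead, the same sequence appears whenever its pre-conditions pass.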

Proceduralized Fate system

Because this runs alongside the simulation (or as the simulation!), at any given point you can know which actions characters are currently “fated” to take. A character might have the Doomed Lover scaffold selected for them at timestep 2, and it won’t play out for a long time, but you can query the system to give intimations of their eventual fate.
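Querying a fate can be as simple as asking which beats of the active scaffold still lie ahead. A sketch, assuming beats are stored with absolute timesteps; `ActiveScaffold`, `intimations`, the `horizon` parameter, and the Doomed Lover beats are all invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ActiveScaffold:
    name: str
    selected_at: int              # timestep at which this fate was assigned
    beats: list[tuple[int, str]]  # (absolute timestep, sub-event) pairs, in order


def intimations(fate: ActiveScaffold, now: int, horizon: Optional[int] = None) -> list[str]:
    """Sub-events the character is fated to reach after `now`, optionally within `horizon` steps."""
    return [beat for t, beat in fate.beats
            if t > now and (horizon is None or t <= now + horizon)]


doomed_lover = ActiveScaffold("DoomedLover", selected_at=2, beats=[
    (10, "MeetBeloved"), (40, "SecretVows"), (90, "Separation"), (120, "DiesOfGrief"),
])
print(intimations(doomed_lover, now=2, horizon=40))
```

A prophecy or dialogue system could then hint at the next beat long before it happens.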

Why Not Both?

You don’t have to pick one or the other, of course. You could layer these systems on top of each other: use history as process to generate backstories, then use a simulation to handle character decisions moving forward, and only build out text descriptions on a need-to-know basis, when the player encounters historical accounts or NPC conversations. The text would then depend on which character is doing the rendering.
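The need-to-know part can be a small lazy, per-narrator cache: bare facts are produced up front, and prose only gets rendered the first time someone actually tells that event from a given perspective. A sketch under those assumptions; `LazyHistory`, the `renders` counter, and the one-line `_render` stand-in for a perspective-specific scaffold library are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class LazyHistory:
    """Backstory facts generated up front; prose is rendered only when asked for."""
    facts: dict  # event id -> bare fact produced by history-as-process
    renders: int = field(default=0, init=False)  # how many times text was generated
    _cache: dict = field(default_factory=dict, init=False, repr=False)

    def tell(self, event_id: str, narrator_trait: str) -> str:
        """Render an event from one narrator's perspective, at most once per pair."""
        key = (event_id, narrator_trait)
        if key not in self._cache:
            self.renders += 1
            self._cache[key] = self._render(self.facts[event_id], narrator_trait)
        return self._cache[key]

    @staticmethod
    def _render(fact: str, trait: str) -> str:
        # Stand-in for a perspective-specific scaffold library (history as artifact).
        spin = {"pessimist": "Typical.", "optimist": "It all worked out in the end."}
        return f"{fact} {spin.get(trait, '')}".strip()


history = LazyHistory({"mill": "The mill burned down."})
print(history.tell("mill", "pessimist"))
print(history.tell("mill", "optimist"))
```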

What Are You Using?

For Delve I’m using the second approach, because simulation is not as important to me as generating fully fleshed-out, rich event descriptions. My hope is that I can slowly turn the dial up on the simulation by building out pre-conditions, post-conditions, and effects. But the most important thing for me is the memories themselves: specifically, the semantic tags associated with them, and the breadth of generativity I can push without giving myself an unholy authorial burden.

If you want to know more about the full spec for events beyond scaffolds, check out this other blog post. If you’re going to be at GDC 2018, you can also pull me aside and I’ll be more than happy to show you a demo and talk your ear off about it!

…holy shit you read the whole thing? Thanks!